We broke our agents, so you don't have to

Master the reliability layer most agent courses skip: Evals, Observability, & Deployment

If this sounds familiar, you’re not alone:

2025 gave us agent hype. It didn’t give us a reliable way to build agents. Most developers are still guessing: which tools to use, how to wire the system together, and how to catch failures with evals and monitoring before users do.

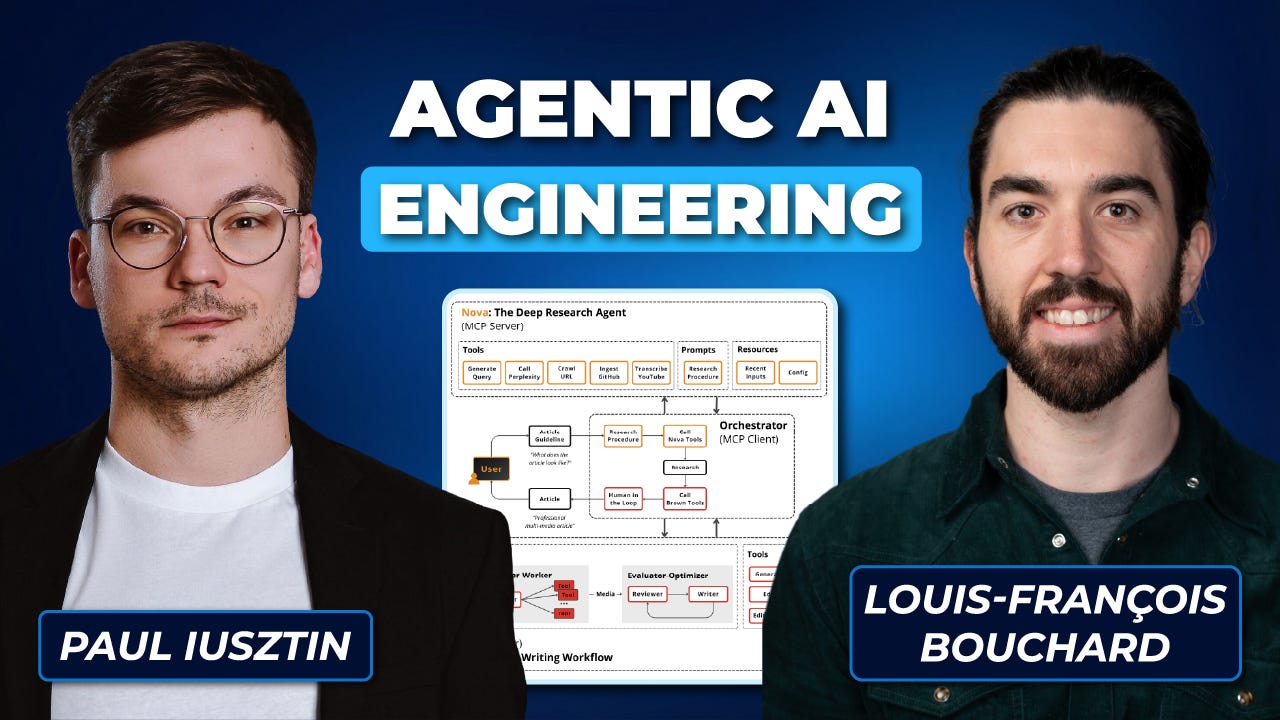

So after nine months of building, breaking, rebuilding, and stress-testing, Agentic AI Engineering is finally live. Our newest course, built together with Paul Iusztin, teaches you to design, build, evaluate, and deploy autonomous AI systems.

See what you’ll build (syllabus + projects)

Here’s what early students said after going through the material:

“Excellent in depth handling of tradeoffs in evaluating and deploying agent based solutions. A useful mixture of theory and practice, learnt the hard way by expert practitioners.” — Cathal Curtin

“Every AI Engineer needs [a] course like that.” — Ahmed Medhat

“Industry-focused, emphasizing real-world constraints rather than flashy demos, and highly hands-on.” — Abreham Melese

What You Will Build

In the course, you’ll build two agent systems and learn how to keep them reliable when the environment stops being friendly: when tools fail, inputs get messy, latency matters, and “it worked once” isn’t useful.

You’ll build a Research Agent that runs iterative loops, integrates real tools, produces structured artifacts, and supports human-in-the-loop checkpoints with clear stopping conditions. Then you’ll build a Writing Workflow Agent that turns that research into structured, multi-modal outputs using evaluator–optimizer patterns, orchestration, versioning, and state.

But the core of the course is the reliability layer most agent content skips: you’ll design eval datasets and human-in-the-loop processes, implement LLM judges and pass/fail checks, add observability with tracing, and set up monitoring so you can debug regressions quickly and improve the system deliberately rather than by guessing.

Check out the full course details →

Who Is This For?

This is engineering-heavy and opinionated, designed for developers who want depth. You’ll feel at home if you’re comfortable with Python + LLM APIs, have basic cloud familiarity, and don’t mind debugging failures that aren’t clean.

We built the course by starting with a system we’d actually use, pushing it until it broke, and then turning those failure modes into the curriculum, refined with feedback from 180 alpha testers. The goal is to prepare you for what agents will be judged on in 2026: operational reliability, meaning measurable quality, inspectable behavior, and controlled autonomy.

If your goal is to build systems that survive production and the AI era, start here.

The early-bird seats sold out in under a week. The next 100 seats are now $499 (the lowest available price after early bird). You get lifetime access, ongoing updates, Discord access, live introductory calls, and a 30-day refund if you go through the early material and realize it’s not what you need.

