This AI newsletter is all you need #101

What happened this week in AI by Louie

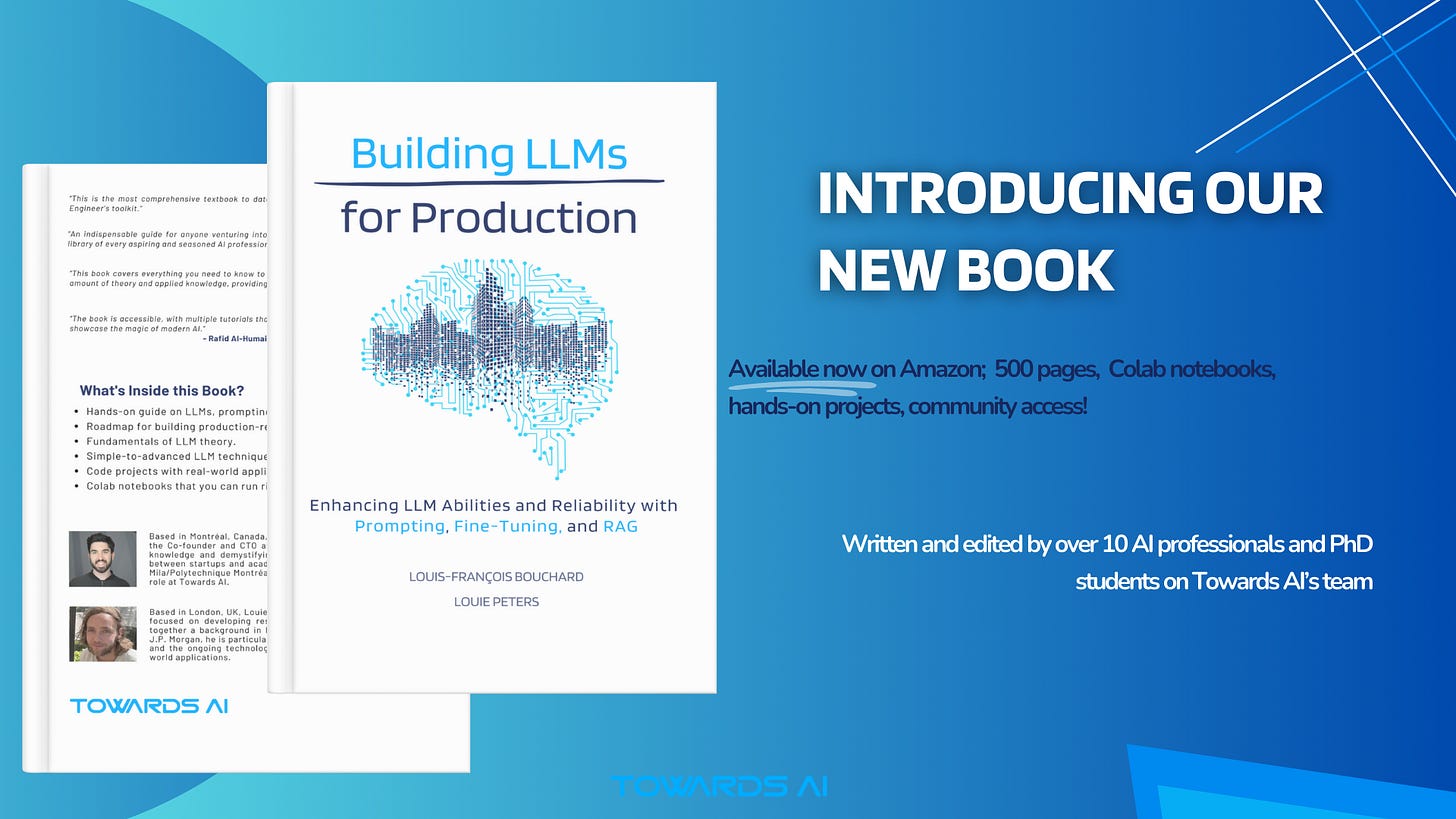

We’ve been quietly working on something for more than a year, and we are now ready to share it with you. With contributions from over a dozen AI experts on our team, industry experts, and even Jerry Liu, co-founder of LlamaIndex, we are excited to announce our first book, Building LLMs for Production: Enhancing LLM Abilities and Reliability with Prompting, Fine-Tuning, and RAG. Aligning with our goal to make AI more accessible, we have released a 470-page technical book covering everything you need to know about LLMs, from understanding how they work to building powerful applications with them.

Our book builds on the 5,000+ AI tutorials and articles we have published since 2019, particularly many of the materials written for our hugely successful GenAI:360 course in collaboration with Activeloop and Intel. We are super excited to share it with you, and many thanks to our team of 10+ writers, editors, and partners on course and book materials to get us to this launch! Feedback and Amazon reviews would be very helpful!

Focused on practical solutions for real-world challenges, our book covers everything from the basics of LLM concepts to advanced techniques. It’s perfect for building reliable, scalable AI applications. This 470+ page resource dives deep into enhancing LLM abilities with Prompting, Fine-Tuning, and RAG and includes hands-on projects, Colab notebooks, and exclusive community access. Our expert team at Towards AI, along with curated contributions from leaders at Activeloop, LlamaIndex, Mila, and more, has tailored this guide for those with intermediate Python knowledge. However, concept explanations are accessible to anyone.

Early feedback has been overwhelmingly positive!

Here’s what industry leaders are saying:

“This is the most comprehensive textbook to date on building LLM applications — all essential topics in an AI Engineer’s toolkit.” — Jerry Liu, Co-founder and CEO of LlamaIndex.

“This book covers everything you need to know to start applying LLMs in a pragmatic way — it balances the right amount of theory and applied knowledge, providing intuitions, use-cases, and code snippets.” — Jeremy Pinto, Senior Applied Research Scientist at Mila.

Get ready to elevate your AI toolkit and start building robust AI applications.

Get your copy of “Building LLMs for Production” today!

Why should you care?

The book will teach you the practical skills that universities don’t! Our goal is to close this gap so that students and others can get into AI with skills tailored to the industry and start building real projects. The book is packed with theories, concepts, projects, applications, and experience that you can confidently put on your CV.

Get your copy of “Building LLMs for Production” today and start building robust AI applications!

- Louie Peters — Towards AI Co-founder and CEO

This issue is brought to you thanks to Paragon:

Multi-tenant RAG vs fine-tuning with your customers’ external data

If you are building an AI SaaS application, relying purely on foundational models like Llama 3, GPT-4, or Claude under the hood isn’t good enough — you need to leverage customer-specific data to build tailored solutions. But should you:

Fine-tune the models with customers’ external data

Implement RAG (retrieval augmented generation)

Or do both?

The answer depends on which datasets you have available, how close to real-time you need the context to be, and security considerations. This article tackles this topic in great detail and explains how you should think about each approach. Read the article here: RAG vs. Fine-tuning for Multi-Tenant AI SaaS Applications Guide

Hottest News

1. NVIDIA Announces Financial Results for First Quarter Fiscal 2025

NVIDIA reported revenue of $26.0 billion for the first quarter, up 18% from the previous quarter and 262% from a year ago. Results were driven by H100 chip sales for training and inference of AI models, indicating again the scale of growth in the sector since the launch of ChatGPT.

2. Microsoft Build 2024: Everything Announced

Microsoft announced several new features, including updates to its AI chatbot Copilot, new Microsoft Teams tools, and more. Most notable are the Copilot Agents, AI assistants that promise to “independently and proactively orchestrate tasks for you.” The company also rolled out Phi-3-vision, a new version of the Phi-3 AI model announced in April.

3. Amazon Plans to Give Alexa an AI Overhaul and a Monthly Subscription Price

Amazon is updating Alexa with advanced generative AI capabilities and launching an additional subscription service separate from Prime in an effort to stay competitive with Google and OpenAI’s chatbots, reflecting the company’s strategic emphasis on AI amidst internal and leadership changes.

4. Here’s What’s Really Going On Inside an LLM’s Neural Network

New research from Anthropic offers a new window into what’s going on inside the Claude LLM’s “black box.” The company’s latest paper, “Extracting Interpretable Features from Claude 3 Sonnet,” describes a powerful new method that partially explains how the model’s millions of artificial neurons fire to create surprisingly lifelike responses to general queries.

5. Meta Introduces Chameleon — A Multimodal Model

Meta’s AI research lab just introduced Chameleon, a new family of ‘early-fusion token-based’ AI models that can understand and generate text and images in any order. Chameleon shows the potential for a different type of architecture for multimodal AI models, with its early-fusion approach enabling more seamless reasoning and generation across modalities.

Five 5-minute reads/videos to keep you learning

This is a series of step-by-step video tutorials from Meta to help you get started with their Llama models. It primarily covers how to run Llama 3 on Linux, Windows, and Mac and shows other ways of running it.

2. PaliGemma: Open Source Multimodal Model by Google

Google has introduced PaliGemma, an open-source vision language model with multimodal capabilities that outperforms its contemporaries in object detection and segmentation. This blog walks you through its specifications, capabilities, limitations, use cases, how to fine-tune and deploy it, and more.

3. The Foundation Model Transparency Index After 6 Months

The Foundation Model Transparency Index, launched in October 2023, is an ongoing initiative to measure and improve transparency in the foundation model ecosystem. This article is a follow-up study that finds developers have become more transparent, though ample room for improvement remains. The paper and transparency reports are available on the project’s website.

4. Decoding GPT-4‘o’: In-Depth Exploration of Its Mechanisms and Creating Similar AI

OpenAI has launched the groundbreaking AI GPT-4o, a model that combines many models. This blog post discusses how GPT-4o works and how you can create a similar model.

5. GPU Poor Savior: Revolutionizing Low-Bit Open Source LLMs and Cost-Effective Edge Computing

The article explores progress in developing low-bit quantized large language models optimized for edge computing, highlighting the creation of over 200 models that can run on consumer GPUs such as the RTX 3090. These models achieve notable resource efficiency via advanced quantization methods, aided by new tools like Bitorch Engine and green-bit-llm for streamlined training and deployment.
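For readers new to quantization, here is a minimal sketch of the general idea behind storing weights in a few bits. It uses plain symmetric uniform quantization, which is an assumption chosen for illustration and is not the method used by the article, Bitorch Engine, or green-bit-llm. The function names and sample weights are hypothetical.

```python
def quantize(weights, bits=4):
    """Symmetric uniform quantization (illustrative only).

    Maps each float to a signed integer in [-qmax, qmax],
    where qmax = 2**(bits - 1) - 1, using one shared scale.
    """
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero input so we never divide by zero.
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


# Hypothetical weights, chosen only to show the round trip.
weights = [0.12, -0.53, 0.97, -0.08, 0.31]
q, scale = quantize(weights, bits=4)
restored = dequantize(q, scale)

# The worst-case rounding error is about half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, round(scale, 4), round(max_error, 4))
```

Each weight now needs only 4 bits plus one shared scale, at the cost of a small rounding error. Production low-bit methods typically use per-group scales and more careful calibration than this sketch does.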

Repositories & Tools

1. Mistral-7B-Instruct-v0.3 is an instruct fine-tuned version of the Mistral-7B-v0.3.

2. Mistral Fine-tune is the official repo to fine-tune Mistral open-source models using LoRA.

3. Perplexica is an AI-powered search engine. It is an open-source alternative to Perplexity AI.

4. Verba is an open-source RAG tool with customizable frameworks.

5. Taipy turns data and AI algorithms into production-ready web applications.

Top Papers of The Week!

1. Retrieval-Augmented Generation for AI-Generated Content: A Survey

This paper reviews existing efforts to integrate the RAG technique into AIGC scenarios. It first classifies RAG foundations according to how the retriever augments the generator, distilling the fundamental abstractions of the augmentation methodologies for various retrievers and generators.

2. Scaling Monosemanticity: Extracting Interpretable Features from Claude 3 Sonnet

The paper reports on successfully scaling sparse autoencoders for extracting diverse, high-quality features from Claude 3 Sonnet, Anthropic’s medium-sized AI model. These features, which are multilingual, multimodal, and highly abstract, include significant safety-relevant aspects such as bias, deception, and security vulnerabilities. Moreover, these features can be used to steer the language models.

3. Chain-of-Thought Reasoning Without Prompting

The study investigates the presence of Chain-of-Thought reasoning in pre-trained large language models by altering the decoding process to consider multiple token options. It reveals that this approach can uncover intrinsic reasoning paths, resulting in an improved understanding of the models’ capabilities and linking reasoning to greater output confidence, as demonstrated across different reasoning benchmarks.

4. Thermodynamic Natural Gradient Descent

The paper presents a novel hybrid digital-analog algorithm that imitates natural gradient descent for neural network training, promising the better convergence rates of second-order methods while maintaining computational efficiency akin to first-order methods. By exploiting the properties of thermodynamic analog systems, this approach circumvents the expensive computations typical of current digital techniques.

5. Not All Language Model Features Are Linear

A recent study disputes the linear representation hypothesis in language models by revealing multi-dimensional representations through sparse autoencoders, notably circular representations for time concepts in GPT-2 and Mistral 7B. These representations have proven beneficial for modular arithmetic tasks, and intervention experiments on Mistral 7B and Llama 3 8B underscore their significance in language model computations.
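To build intuition for why a circular representation suits modular arithmetic, here is a small sketch. It is our own toy illustration, not code or a method from the paper: it places the days of the week on a unit circle and shows that adding days corresponds to rotating the point. The function names and the Monday = 0 convention are assumptions.

```python
import math

PERIOD = 7  # days in a week; Monday = 0 is an assumed convention


def embed(k, period=PERIOD):
    """Place integer k on the unit circle as a 2D point."""
    angle = 2 * math.pi * k / period
    return (math.cos(angle), math.sin(angle))


def rotate(point, steps, period=PERIOD):
    """Rotate a point by `steps` positions around the circle."""
    x, y = point
    theta = 2 * math.pi * steps / period
    return (x * math.cos(theta) - y * math.sin(theta),
            x * math.sin(theta) + y * math.cos(theta))


def decode(point, period=PERIOD):
    """Read the nearest integer position back off the circle."""
    angle = math.atan2(point[1], point[0])
    return round(angle / (2 * math.pi) * period) % period


# Saturday (5) plus 4 days wraps around to Wednesday (2).
result = decode(rotate(embed(5), 4))
print(result)  # 2
```

The wrap-around comes for free from the geometry: a rotation past the full circle lands on the same point as the remainder would, so no explicit modulo step is needed on the 2D representation itself.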

Quick Links

1. Microsoft introduces Phi-Silica, a 3.3B parameter model made for Copilot+ PC NPUs. It will be embedded in all Copilot+ PCs when they go on sale starting in June. Phi-Silica is the fifth and smallest variation of Microsoft’s Phi-3 model.

2. Cohere announced the open weights release of Aya 23, a new family of state-of-the-art multilingual language models. Aya 23 builds on the original model Aya 101 and serves 23 languages.

3. IBM announced it will open-source its Granite AI models and will help Saudi Arabia train an AI system in Arabic. The Granite tools are designed to help software developers complete computer code faster.

Who’s Hiring in AI!

AI Technical Writer and Developer for Large Language Models @Towards AI Inc (Remote)

Software Engineer II, Health Data @Nuna (USA/Remote)

Data Analyst @Simetrik (Remote)

Data Associate @Movement Labs (USA/Remote)

Mid-Level Data Developer, Brazil @CI&T (Brazil/Remote)

Senior Machine Learning Engineer @Tubi (USA/Remote)

Data Scientist (L5) — Games Discovery and Research @Netflix (Los Gatos, California, USA)

Interested in sharing a job opportunity here? Contact sponsors@towardsai.net.

If you are preparing for your next machine learning interview, don’t hesitate to check out our leading interview preparation website, confetti!

This AI newsletter is all you need #101 was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.
