This AI newsletter is all you need #45

What happened this week in AI by Louie

This week witnessed several releases and developments in AI models, continuing the trend of open-source model alternatives. Among these, two popular consumer-facing LLM-based products, ChatGPT, and Github Co-pilot, faced new open-sourced competition with HuggingChat and Replit-Code, respectively. The focus on regulation and AI safety persisted as ChatGPT access was restored in Italy, and AI pioneer Geoffrey Hinton left Google partly due to his concerns about AI safety.

Geoffrey Hinton has played a key role in many of the key breakthroughs in machine learning and the path to transformers and the recent success of LLMs, from backpropagation in 1986 to Alexnet in 2012. Hinton quit Google in part so he could talk more freely about the risks of AI, amid a recent adjustment of his views on the potential of AI and heightening competition between Google and Microsoft. “The idea that this stuff could actually get smarter than people — a few people believed that,” “But most people thought it was way off. And I thought it was way off. I thought it was 30 to 50 years or even longer away. Obviously, I no longer think that.”

Hinton’s changing views and his increased perception of AI risk are a testament to the rapid progress in AI over the past year and illustrate just how little we really know about LLMs and what progress or roadblocks we might find even six months ahead. While we find many arguments over both AI’s positive potential and risks (or lack thereof) are made with overconfidence given still huge uncertainty, Hinton’s deep and earned respect in the community and flexible and measured views carry particular weight.

Towards AI and Learn Prompting are Announcing the HackAPrompt competition!

We are thrilled to announce the first-ever prompt hacking competition, HackAPrompt, aimed at enhancing AI safety. In this competition, participants will try to hack as many prompts as possible by injecting, leaking, and defeating the sandwich defense. The competition is designed to be beginner-friendly, welcoming even non-technical people to participate.

Register now to win prizes worth over $35,000!

Launching on: May 5th at 6:00 PM EST

Hottest News

Hugging Face has launched a new open-source chatbot model named HuggingChat, which is considered an alternative to OpenAI’s ChatGPT. Users can test the model via a web interface, and it can also be integrated into existing apps and services through Hugging Face’s API.

2. From DrakeGPT to Infinite Grimes, AI-generated music strikes a chord

Last week, a song that used AI deepfakes of Drake and the Weeknd’s voices gained popularity. In the meantime, Grimes has announced on Twitter that she will offer 50% royalties for any AI-generated song that features her voice. Musicians such as Holly Herndon and YACHT have adopted AI as a tool to push the boundaries of their creativity.

3. Amazon plan to improve Alexa using large language models

During Amazon’s first-quarter earnings call, CEO Andy Jassy announced that the company is developing a more advanced large language model (LLM) to power Alexa. Jassy stated that the upgraded LLM will be “more generalized and capable,” and that it will help Amazon reach its goal of creating “the world’s best personal assistant.”

4. ChatGPT Will See You Now: Doctors Using AI to Answer Patient Questions

UC San Diego Health, UW Health, and Stanford Health Care are collaborating to test a tool that leverages OpenAI’s GPT to read patient messages and generate draft responses for their doctors. The pilot program’s goal is to determine if AI can reduce the amount of time that medical staff spends responding to patients’ online inquiries.

5. Replit introduced replit-code-v1–3b on ReplitDevDay

During #ReplitDevDay, Replit unveiled replit-code-v1–3b, a new AI language model with 2.7 billion parameters. The model was trained on 525 billion tokens in just 10 days and demonstrates 40% better performance than similar models.

Five 5-minute reads/videos to keep you learning

The article discusses the trend of “autonomous agents” among AI developers. It covers various topics such as the definition of autonomous agents, the opportunities they present, how they work, their potential future, and ways to build or use them. It also provides insights on how to connect with like-minded people interested in autonomous agents.

2. Why do we need RL for language models?

The argument for RL is based on knowledge-seeking queries where we expect truthful answers, and the ability of the model to respond with “I don’t know” or refuse to answer in situations where it is uncertain. For this type of interaction, RL training must be used as supervised training may teach the model to lie.

3. The future of generative AI is niche, not generalized

ChatGPT has led to speculation about artificial general intelligence (AGI). However, the next phase of AI will likely be in specific domains and contexts. The true value of these systems lies not in their use as generalist chatbots, but rather as a class of tools that can be applied to niche domains, offering new ways to discover and explore highly specific information.

4. Navigating the High Cost of AI Compute

This post presents a framework for analyzing the cost factors of an AI company. It covers the time and costs involved in building an AI system, provides a comparative analysis of CSPs and GPUs, and discusses how the costs are likely to evolve over time.

5. Introducing Hidet: A Deep Learning Compiler for Efficient Model Serving

Hidet is a deep learning compiler that simplifies the process of implementing high-performing deep learning operators on modern accelerators like NVIDIA GPUs. It originated from a research project led by the EcoSystem lab at the University of Toronto (UofT) in collaboration with AWS.

Papers & Repositories

AudioGPT is a resource designed for audio applications and tasks such as speech recognition, synthesis, sound detection, and talking head synthesis. However, at present, this model is only available for non-commercial use.

2. Harnessing the Power of LLMs in Practice: A Survey on ChatGPT and Beyond

This paper offers a comprehensive and practical guide for practitioners and end-users who work with LLMs in their NLP tasks. Additionally, it provides researchers and practitioners with valuable insights and best practices for working with LLMs.

3. Learning Agile Soccer Skills for a Bipedal Robot with Deep Reinforcement Learning

This paper examines whether Deep RL can generate complex and safe movement skills at a low cost. While the agents were optimized for scoring, in experiments they walked 156% faster, took 63% less time to get up, and kicked 24% faster than a scripted baseline.

4. Are Emergent Abilities of Large Language Models a Mirage?

This paper proposes an alternative explanation for emergent abilities in artificial intelligence (AI). Specifically, it suggests that for a given task and model family, the choice of metric used to analyze fixed model outputs can lead to the inference of an emergent ability or the lack thereof. The analysis conducted provides compelling evidence to suggest that emergent abilities may not be a fundamental property of scaling AI models.

5. Controlled Text Generation with Natural Language Instructions

This paper introduces InstructCTG, a framework for generating controlled text that incorporates various constraints. This is achieved by conditioning natural language descriptions and demonstrations of the constraints.

Enjoy these papers and news summaries? Get a daily recap in your inbox!

The Learn AI Together Community section!

Weekly AI Podcast

In this week’s episode of the “What’s AI” podcast, Louis Bouchard interviews Limarc Ambalina, VP at HackerNoon, to discuss the impact of AI on writing and journalism. Limarc shares his journey and offers advice for aspiring writers. The episode covers topics such as AI-generated content, how it differs from AI-edited content, how AI can aid writers, and ethics surrounding AI-generated content. Tune in to the podcast for insights on content writing with AI on HackerNoon and more. You can find the podcast on YouTube, Spotify, or Apple Podcasts.

Upcoming Community Events

The Learn AI Together Discord community hosts weekly AI seminars to help the community learn from industry experts, ask questions, and get a deeper insight into the latest research in AI. Join us for free, interactive video sessions hosted live on Discord weekly by attending our upcoming events.

HackAPrompt is aimed at enhancing AI safety. In this competition, participants will try to hack as many prompts as possible by injecting, leaking, and defeating the sandwich defense. The competition is designed to be beginner-friendly, welcoming even non-technical people to participate.

Date & Time: 5th May 7:00 pm EST

2. Prompt Engineering Mastermind

This event is designed to help participants learn how to iterate and enhance ChatGPT prompts. Greg will share his screen displaying ChatGPT, and he will paste a prompt from a member of the audience. Then, we will work together to improve the prompt. This collaborative seminar invites suggestions from the chat participants and shares the tips and techniques that Greg has learned.

Date & Time: 4th May 1:00 pm EST

3. ChatGPT and Google Maps Hands-on Workshop

@george.balatinski is hosting a workshop to harness the power of ChatGPT to create real-world projects for the browser using JavaScript, CSS, and HTML. The interactive sessions are focused on building a portfolio, guiding you to create a compelling showcase of your talents, projects, and achievements. Engage in pair programming exercises, work side-by-side with fellow developers, and foster hands-on learning and knowledge sharing. In addition, we offer valuable networking opportunities with our and other communities in Web and AI. Discover how to seamlessly convert code to popular frameworks like Angular and React and tap into the limitless potential of AI-driven development. Don’t miss this chance to elevate your web development expertise and stay ahead of the curve. Join us at our next meetup here and experience the future of coding with ChatGPT! You can get familiar with some of the additional content here.

Date & Time: 2nd June, 12:00 pm EST

Add our Google calendar to see all our free AI events!

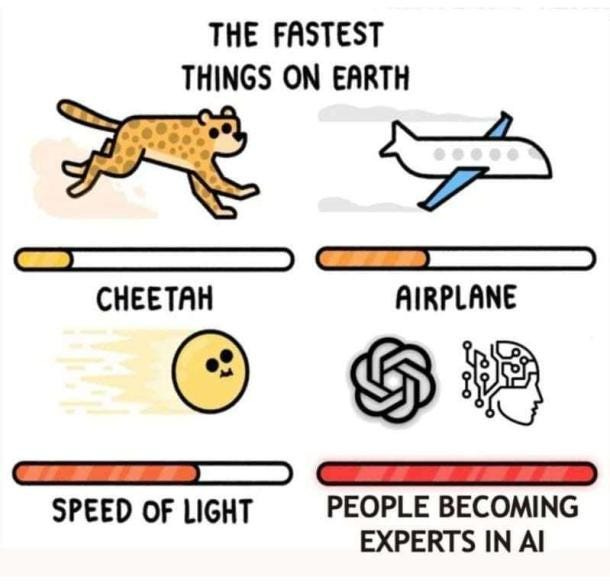

Meme of the week!

Meme shared by dimkiriakos#2286

Featured Community post from the Discord

Jorge.jgnz#2451 has trained a Video Diffusion model (DDPM+time) using a synthetic dataset of small fluid simulations, which is now available on HuggingFace. He has implemented temporal inpainting to perform video prediction by masking the first half of the video and allowing the model to predict the second half. You can find the dataset on HuggingFace and support a fellow community member. Share your feedback or questions in the thread here.

AI poll of the week!

Join the discussion on Discord

TAI Curated section

Article of the week

How to Train Neural Networks With Fewer Data Using Active Learning by Leon Eversberg

This article covers the current state of the art in deep active learning by outlining Active Learning. One of the biggest problems in supervised deep learning is the scarcity of labeled training data. This is where active learning comes in. Not all training data samples are equally valuable to the training process, by selecting only the most valuable training samples, active learning attempts to minimize the amount of labeled training data required.

Our must-read articles

From Detection to Correction: How to Keep Your Production Data Clean and Reliable by Youssef Hosni

Mastering Linear Regression: A Step-by-Step Guide by Anushka sonawane

PyTorch Lightning: An Introduction to the Lightning-Fast Deep Learning Framework by Anay Dongre

If you are interested in publishing with Towards AI, check our guidelines and sign up. We will publish your work to our network if it meets our editorial policies and standards.

Job offers

Machine Learning Engineer, Multimodal Generation — EMEA @HuggingFace (Remote)

Applied Machine Learning Engineer @Snorkel AI (Remote)

Software Engineer, Model Inference @Open AI (San Francisco, CA, USA)

Data Scientist, Content @Patreon (San Francisco, CA, USA)

Machine Learning Researcher @Figma (San Francisco/New York, USA)

Interested in sharing a job opportunity here? Contact sponsors@towardsai.net.

If you are preparing your next machine learning interview, don’t hesitate to check out our leading interview preparation website, confetti!

This AI newsletter is all you need #45 was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.