This AI newsletter is all you need #40

What happened this week in AI by Louis

With the surging demand for generative AI, this week saw preparatory developments for the next wave of AI. Companies are fast-tracking the development of AI products, and generative AI tools are closer to becoming consumer products than ever before. They are already becoming powerful assistants for writers and programmers and rapidly taking on more challenges. The open-source community is also making significant progress in running local LLMs.

For instance, Meta’s LLaMA model has remained a focal point for the academic and open-source communities since its weights were leaked on 4chan. One outcome is llama.cpp, written by the creator of whisper.cpp, which has demonstrated that it is possible to run an LLM on an M1 Mac. Following the recent Alpaca model, which is built on LLaMA, Databricks has released Dolly, which shows that an older open-source large language model (LLM) can be given ChatGPT-like instruction-following abilities by training it on high-quality data for 30 minutes on a single machine. This ability doesn’t require the latest or largest models: Dolly has only 6 billion parameters, far fewer than GPT-3’s 175 billion. The code for Dolly is open-sourced, and the announcement outlines how to create it on Databricks. Models like Alpaca and Dolly may help to democratize LLMs, making them an accessible commodity that can be tailored to every company’s needs.

With the pace of generative AI development, NVIDIA has accelerated the rollout of new hardware as well as a new AI cloud business model. Its latest product announcements at GTC position the company’s AI compute platforms, such as the NVIDIA H100 NVL, L4, L40, and Grace Hopper, to meet the growing demand for AI. Each platform is tailored to specific training and inference tasks.

OpenAI has continued its rapid pace of news and feature releases with the announcement of ChatGPT plugins. These plugins can significantly enhance the capabilities of ChatGPT with browsing, information retrieval, code execution, and third-party services. This has fueled much speculation about whether plugins will become the new “App Store” for LLMs or even something more. See, for example, the excellent Not Boring post “OpenAI’s ChatGPT Plugins and the emergence of an Apex Aggregator”.

The increased availability of generative AI-specific compute, the progress towards open-source tools, the increased capabilities of leading LLMs, and a product-oriented approach to generative AI applications indicate that we have entered a new phase in the commercialization of AI. In this phase, AI is revealing more of its power to change the way we work, interact, create, play, and engage with technology, information, and people. Although the current leading LLMs may still be challenging to run on personal hardware, we might soon see a demo showcasing an open-source model running on a phone.

Hottest News

OpenAI is currently testing new ChatGPT plugins with select users before launching them for widespread use. These plugins are tools that are specifically designed for language models, with safety as a core principle. They assist ChatGPT in accessing up-to-date information, running computations, and utilizing third-party services.

2. Stable Diffusion Reimagine — Stability AI

Stability AI has recently launched Stable Diffusion Reimagine, a new Clipdrop tool that lets users generate an unlimited number of variations of a single image. The tool still has certain limitations and biases, and the company plans to open-source it soon to enable further improvements.

3. GitHub Copilot X: The AI-powered developer experience

GitHub has launched GitHub Copilot X, which leverages OpenAI’s GPT-4 to provide a chat interface and voice-to-code AI for developers. This new developer experience enables Copilot to work with pull requests, command lines, and documentation to answer questions about your projects.

Bard, a Google AI experiment, uses generative AI to collaborate with users, with the aim of enhancing their creativity and productivity. Bard includes safety controls and feedback mechanisms; however, it may occasionally display inaccurate information or offensive statements.

A recent paper from Tatsu Lab introduced Alpaca, an “instruction-tuned” version of Meta’s LLaMA model. The post details the release of Alpaca-30B, the benefits of fine-tuning, usage instructions, and community involvement.

Three 5-minute reads/videos to keep you learning

GNNs, or graph neural networks, are powerful tools for processing graph data and understanding entity relationships. They have seen exponential growth in published research and are expected to continue to rise in popularity. This article will recap some of the highly impactful applications of GNNs and provide an overview of what to expect for the next big wave in AI.

2. Using AI to make teaching easier & more impactful

AI has been shown to enhance learning in classrooms by offering tailored experiences, generating examples, providing feedback, creating study groups, and conducting diagnostic tests. This article provides a summary of the five effective teaching strategies discussed in the paper titled “Using AI to Implement Effective Teaching Strategies in Classrooms: Five Strategies, Including Prompts”.

3. ChatGPT + Code Interpreter = Magic

OpenAI’s ChatGPT has expanded its capabilities with the integration of third-party tools, including a Code Interpreter that enables the generation and execution of code, data analysis, and spreadsheet graphing. This article explores the new features and expanded capabilities of ChatGPT, providing a range of examples for integrating tools such as the Code Interpreter.

The advancements in AI are set to revolutionize various aspects of life, including work, education, healthcare, travel, and communication. This article delves into the opportunities and responsibilities that come with the Age of AI and discusses its impact on industries such as healthcare and education.

5. Getting started with Pytorch 2.0 and Hugging Face Transformers

This blog post offers a comprehensive guide on how to leverage the new features of PyTorch 2.0, such as TorchDynamo, AOTAutograd, PrimTorch, and TorchInductor, to enhance performance. It also provides step-by-step instructions on how to use PyTorch 2.0 and Hugging Face Transformers to fine-tune a BERT model for text classification.

Papers & Repositories

Dolly, a large language model trained on the Databricks Machine Learning Platform, shows that a two-year-old open-source model, GPT-J, can exhibit high-quality instruction-following behavior. Fine-tuning GPT-J for just 30 minutes on a focused corpus of 50k records (Stanford Alpaca) produces a level of performance not characteristic of the foundation model on which Dolly is based.
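To make the idea of instruction fine-tuning on a corpus of records more concrete, here is a minimal sketch of how one instruction record might be turned into a training string. The template wording follows the publicly documented Stanford Alpaca prompt format as best we recall it; the function name `build_training_text` and the record fields are illustrative assumptions, not code taken from Dolly.

```python
# Illustrative sketch only: template wording and field names are assumptions
# modelled on the public Stanford Alpaca format, not Dolly's actual code.
PROMPT_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input "
    "that provides further context. Write a response that appropriately "
    "completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n"
)
PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task. Write a response that "
    "appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)


def build_training_text(record: dict) -> str:
    """Turn one instruction record into the text a causal LM is fine-tuned on."""
    if record.get("input"):
        # Records with extra context use the longer template.
        prompt = PROMPT_WITH_INPUT.format(
            instruction=record["instruction"], input=record["input"]
        )
    else:
        prompt = PROMPT_NO_INPUT.format(instruction=record["instruction"])
    # The model learns to continue the prompt with the reference answer.
    return prompt + record["output"]


if __name__ == "__main__":
    example = {
        "instruction": "Name a primary color.",
        "input": "",
        "output": "Red",
    }
    print(build_training_text(example))
```

Fine-tuning then amounts to ordinary next-token training on tens of thousands of such strings, which is why a short run on a single machine can be enough to change the model's behavior.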

2. Sparks of Artificial General Intelligence

This paper investigates the early version of GPT-4 during its active development at OpenAI. The focus of this report is on identifying its limitations and the challenges that lie ahead in advancing toward deeper and more comprehensive versions of AGI. It also explores the possibility of pursuing a new paradigm that goes beyond next-word prediction.

3. GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models

This paper explores the potential implications of large language models (LLMs), specifically Generative Pre-trained Transformers (GPTs), for the U.S. labor market. The study estimates that around 80% of U.S. workers could have at least 10% of their work tasks affected by LLMs, while approximately 19% of workers may see at least 50% of their tasks affected.

4. MM-ReAct: Prompting ChatGPT for Multimodal Reasoning and Action

This paper presents MM-REACT, which integrates ChatGPT with a pool of vision experts to achieve multimodal reasoning and action. It explores advanced vision tasks that may exceed the capabilities of existing models and introduces a textual prompt design that represents text descriptions, textualized spatial coordinates, and dense visual signals, such as images and videos, as aligned file names.

5. Vid2Seq: a pretrained visual language model for describing multi-event videos

Google has introduced a paper titled “Vid2Seq: Large-Scale Pretraining of a Visual Language Model for Dense Video Captioning” which will appear at CVPR 2023. The paper describes the Vid2Seq architecture, which adds time tokens to a language model, enabling it to predict event boundaries and textual descriptions in the same output sequence.
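The time-token idea can be sketched in a few lines. The example below quantizes event timestamps into discrete tokens and interleaves them with captions in one output sequence. The token spelling `<time_k>`, the default of 100 bins, and both function names are assumptions made for illustration; this is not the authors' implementation.

```python
def time_token(t_seconds: float, duration: float, n_bins: int = 100) -> str:
    """Quantize an absolute timestamp into one of n_bins relative time tokens."""
    # Express the timestamp as a fraction of the video length, clamped to [0, 1].
    frac = min(max(t_seconds / duration, 0.0), 1.0)
    # Map the fraction to a bin index; the very end of the video goes to the last bin.
    idx = min(int(frac * n_bins), n_bins - 1)
    return f"<time_{idx}>"


def events_to_sequence(events, duration: float, n_bins: int = 100) -> str:
    """Serialize (start, end, caption) events into a single target sequence."""
    parts = []
    for start, end, caption in sorted(events):
        parts.append(time_token(start, duration, n_bins))
        parts.append(time_token(end, duration, n_bins))
        parts.append(caption)
    return " ".join(parts)


if __name__ == "__main__":
    events = [
        (30.0, 60.0, "he walks outside"),
        (0.0, 30.0, "a man opens a door"),
    ]
    print(events_to_sequence(events, duration=60.0))
    # <time_0> <time_50> a man opens a door <time_50> <time_99> he walks outside
```

Because boundaries and captions share one sequence, a single language-model decoder can predict when an event happens and what it is in the same pass.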

The Learn AI Together Community section!

Upcoming Community Events

The Learn AI Together Discord community hosts weekly AI seminars to help the community learn from industry experts, ask questions, and get a deeper insight into the latest research in AI. Attend our upcoming events to join free, interactive video sessions hosted live on Discord every week.

This week, emptyshore will present the neural network architecture described in the paper “3D-PMRNN: Reconstructing three-dimensional porous media from two-dimensional images with recurrent neural network” during a seminar. The session will not be recorded, so join us live for the first seminar here or add it to your calendar here!

Date & Time: 28th March, 9:00 pm EST

Add our Google calendar to see all our free AI events!

Meme of the week!

Meme shared by Recruiter6061#8864

Featured Community post from the Discord

We’re excited to share that FromCommunity has seen tremendous success since we enabled invite-only access just 2 months ago, receiving over 2,500 job applications from talented developers in amazing tech communities. FromCommunity helps companies hire directly from open-source and tech communities. And now, with the help of ChatGPT, we’re expanding our platform’s capabilities by evaluating candidates’ coding skills and automating our communication with them. Find us on ProductHunt; your support and feedback would mean the world to us!

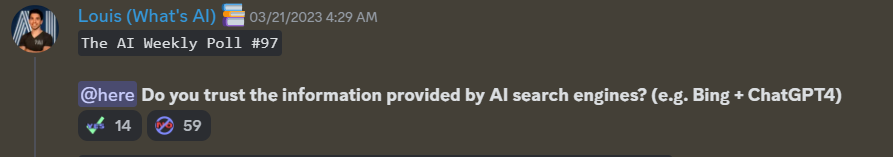

AI poll of the week!

Join the discussion on Discord.

TAI Curated section

Article of the week

Drift Detection Using TorchDrift for Tabular and Time-series Data by Rahul Veettil

Machine learning models are created to make predictions based on data. However, the data in the real world is constantly changing, and this can affect the model’s accuracy. This phenomenon is known as data drift, which can cause incorrect predictions and poor performance. In this blog post, the author discusses how to detect data drift using the Python library TorchDrift.
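As a rough illustration of what detecting drift means in practice, the sketch below compares a reference sample of one feature with a newer sample using the two-sample Kolmogorov-Smirnov statistic, written in plain Python. This is not TorchDrift's API, and the article may use different detectors; the function names and the 0.3 threshold are hypothetical choices for the example.

```python
from bisect import bisect_right


def ks_statistic(reference, current) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    gap = 0.0
    # The largest gap is always reached at one of the observed values.
    for x in ref + cur:
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_cur = bisect_right(cur, x) / len(cur)
        gap = max(gap, abs(cdf_ref - cdf_cur))
    return gap


def has_drifted(reference, current, threshold: float = 0.3) -> bool:
    """Flag drift when the distributions differ by more than a chosen threshold."""
    # The threshold is a hypothetical value; a real test would use a p-value.
    return ks_statistic(reference, current) > threshold


if __name__ == "__main__":
    training_values = [1.0, 2.0, 3.0, 4.0, 5.0]
    production_values = [11.0, 12.0, 13.0, 14.0, 15.0]
    print(ks_statistic(training_values, training_values))
    print(has_drifted(training_values, production_values))
```

A statistic near 0 means the two samples look alike, while a value near 1 means they barely overlap, which is the situation in which a model trained on the reference data is most likely to mispredict.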

Our must-read articles

Correlation and Causation: What are the Differences? by Cornellius Yudha Wijaya

Unlocking New Insights with Vision Transformer by Anay Dongre

If you want to publish with Towards AI, check our guidelines and sign up. We will publish your work to our network if it meets our editorial policies and standards.

Job offers

Lead Data Engineer, Kafka @ASAPP (Hybrid/Bangalore, India)

Senior DevOps Engineer @Labelbox (Remote)

Senior Software Engineer @Mercari (Remote)

Director/ Sr. Director, Scientific Data Design @Treeline Biosciences (Remote)

Senior Data Engineer @Grindr (Remote)

Senior AI Software Engineer @ Spot AI (Remote)

Interested in sharing a job opportunity here? Contact sponsors@towardsai.net.

If you are preparing your next machine learning interview, don’t hesitate to check out our leading interview preparation website, confetti!

This AI newsletter is all you need #40 was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.