This AI newsletter is all you need #72

What happened this week in AI by Louie

This week, AI news was dominated by OpenAI’s DevDay and its launch of many new models and features, which drowned out Elon Musk’s earlier entry into the LLM race with Grok, xAI’s GPT-3-class model. DevDay included the launch of GPT-4 Turbo, a new model that is better, faster, and cheaper, along with vision capability via the API, an integrated retrieval engine, and API access to several other models (DALL-E 3, a new Whisper speech-to-text model, and new text-to-speech models). The surprise of the event, however, was the release of “GPTs”: a no-code way for people to build their own custom GPT agents in ChatGPT, together with a forthcoming “GPT Store” where they will be able to monetize them.

This latest set of releases from OpenAI leaves us wondering: could this be the “ChatGPT moment” for GPT-4-class models? The original ChatGPT launch combined iterative improvements to GPT-3-class models with a much better user interface, which drove widespread adoption of GPT-3 for chatbot applications. These releases have a similar feel, but this time for GPT-4-class models and for the adoption of RAG and agent applications: an easier-to-use agent-building interface, together with faster and more affordable models, could let LLMs reach a new level of potential.

Following the event, we noticed the conversation focused on two questions: 1) Will OpenAI’s aggressive pricing and expanding built-in functionality threaten other AI startups and “GPT wrappers”? 2) Will OpenAI create a new app store ecosystem with its GPTs product? On the first point, we see merit in arguments on both sides. OpenAI has caught up with Anthropic’s Claude 2 on its previously differentiating longer context length (at a slightly lower price), released a text-to-speech API significantly cheaper than ElevenLabs, and encroached on the territory of document processing and Retrieval Augmented Generation (RAG) ecosystem players such as LangChain. At the same time, however, it has released an extremely powerful toolkit for building more capable LLM and RAG products, with a better GPT-4 and many new models available via the API. It has also made it much easier to build on top of OpenAI models and significantly lowered the barriers to entry for people who want to build their own projects.

- Louie Peters — Towards AI Co-founder and CEO

Hottest News

1. All the news from OpenAI’s first developer conference

OpenAI’s DevDay included the launch of a new, better, faster, and cheaper GPT-4 Turbo model, vision capability via the API, an integrated retrieval engine, and API access to several other models. The surprise of the event, however, was the release of “GPTs”, a no-code way to build custom GPT agents, along with a forthcoming “GPT Store” to distribute them.

2. RedPajama-Data-v2: An Open Dataset With 30 Trillion Tokens for Training LLMs

RedPajama-Data-v2, the largest public training dataset for language model research, contains 30 trillion filtered and deduplicated tokens drawn from 84 CommonCrawl dumps in five major languages. It includes pre-computed quality annotations that can be used to filter and weight documents, and it is available for both research and commercial use.
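To make “filtering and weighting with pre-computed annotations” concrete, here is a minimal sketch. It assumes each document carries two hypothetical signals, a word count and a reference-model perplexity; the real dataset ships its own, differently named set of annotations, and the thresholds and weighting rule below are illustrative choices, not recommendations from the dataset authors.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Document:
    text: str
    # Hypothetical quality annotations; RedPajama-Data-v2 ships its own,
    # differently named, set of pre-computed signals.
    word_count: int
    perplexity: float


def passes_quality(doc: Document, min_words: int = 50, max_perplexity: float = 1000.0) -> bool:
    """Keep documents that are long enough and not too 'surprising' to a reference LM."""
    return doc.word_count >= min_words and doc.perplexity <= max_perplexity


def sampling_weight(doc: Document) -> float:
    """Illustrative weighting rule: lower perplexity gives a higher sampling weight."""
    return 1.0 / doc.perplexity


def filter_documents(docs: List[Document], **thresholds) -> List[Document]:
    """Return only the documents that pass the (illustrative) quality thresholds."""
    return [d for d in docs if passes_quality(d, **thresholds)]


docs = [
    Document("long, fluent text ...", word_count=120, perplexity=250.0),
    Document("too short", word_count=2, perplexity=180.0),
    Document("long but noisy ...", word_count=300, perplexity=4200.0),
]
kept = filter_documents(docs)
```

Because the annotations are computed once and stored alongside the text, different teams can apply different thresholds or weights without re-running the quality models.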

3. Elon Musk’s First AI Product Is a Chatbot Named Grok

Elon Musk’s AI startup xAI has launched its first chatbot, Grok, which will be available to X Premium+ subscribers. The Grok team includes AI specialists from DeepMind, OpenAI, Google, Microsoft, and Tesla. Musk highlights that Grok’s ability to access real-time information on the X platform gives it an edge over other chatbots.

4. A New Beatles Song Is Set for Release After 45 Years — With Help From AI

A new Beatles song featuring the complete Fab Four has been released 45 years after John Lennon began writing it, with the help of artificial intelligence. This opens up possibilities for reviving more old recordings or even creating new music, but it also raises ethical questions about consent and artistic manipulation.

5. A Glimpse of the Next Generation of AlphaFold

The next generation of AlphaFold can generate structure predictions for nearly all molecules in the Protein Data Bank, not just proteins, deepening our understanding of biomolecules and supporting research on complex protein structures. It has potential applications in cancer drug discovery, vaccine development, and pollution reduction.

Five 5-minute reads/videos to keep you learning

1. The Alignment Handbook

Hugging Face has released the Alignment Handbook, a set of guides for aligning language models. The guides cover techniques such as supervised fine-tuning, reward modeling, rejection sampling, and direct preference optimization (DPO) to improve language model performance.
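Of the techniques listed, DPO is the easiest to show in a few lines: it trains directly on pairs of preferred (“chosen”) and dispreferred (“rejected”) responses, using a frozen reference model instead of a separately trained reward model. Below is a minimal, framework-free sketch of the per-pair loss as defined in the original DPO paper; it is not the handbook’s code, and the default `beta=0.1` is an assumption for illustration.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float, beta: float = 0.1) -> float:
    """DPO loss for a single preference pair.

    Each argument is the summed log-probability of a full response under either
    the policy being trained or the frozen reference model.
    """
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(margin)) is identical to softplus(-margin)
    return softplus(-margin)
```

When the policy matches the reference model, the loss is log 2; it falls as the policy raises the chosen response’s likelihood relative to the rejected one, which is exactly the pressure the optimizer applies.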

2. How AI Detectors Can Destroy Innocent Writers’ Livelihoods

The high false-positive rate of general-purpose AI detectors had a devastating effect on freelance writer Michael Berben: after being falsely accused of cheating, he lost his job. The article sheds light on how common false positives are and on the lack of effective ways to challenge a detector’s verdict.
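Why does even a modest false-positive rate hurt so much? When most submissions are human-written, wrongly flagged humans can make up a large share of everything the detector flags. The back-of-the-envelope sketch below uses purely hypothetical numbers (1,000 human texts, 100 AI texts, a 5% false-positive rate, a 90% true-positive rate); none of these figures come from the article.

```python
def flag_breakdown(n_human: int, n_ai: int,
                   false_positive_rate: float, true_positive_rate: float):
    """Expected flags from an AI detector applied to a mixed pool of texts.

    Returns (human texts wrongly flagged, AI texts correctly flagged,
    share of all flagged texts that were actually written by humans).
    """
    false_flags = n_human * false_positive_rate
    true_flags = n_ai * true_positive_rate
    total = false_flags + true_flags
    share_human = false_flags / total if total else 0.0
    return false_flags, true_flags, share_human


# Hypothetical scenario: 1,000 human-written and 100 AI-written submissions.
false_flags, true_flags, share_human = flag_breakdown(1000, 100, 0.05, 0.90)
```

Under these assumed rates, about 50 of the 140 flagged texts (roughly a third) would belong to innocent human writers.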

3. AI + APIs — What 12 Experts Think the Future Holds

The convergence of AI and APIs is revolutionizing the tech world. Startups leveraging these tools can challenge established giants and reshape power dynamics in the digital economy. This essay highlights the thoughts and opinions of 12 experts on the opportunity that sits at the crossroads of AI and APIs.

4. After 500+ LoRAs Made, Here Is the Secret

This blog post emphasizes the importance of dataset quality and parameter tuning for getting the most out of LoRAs. It stresses the need for a clean dataset, recommends using a 33B model for better fine-tuning, and cautions that gradient accumulation can hurt output quality.
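For readers new to LoRA, the core idea from the original LoRA paper is to freeze a pretrained weight matrix W (d × k) and learn only a low-rank update B·A, with B of shape d × r and A of shape r × k, scaled by alpha / r. The pure-Python sketch below shows the parameter savings and how a trained adapter can be merged back into W; the matrix sizes and rank are illustrative assumptions, and real training would use a deep learning framework.

```python
from typing import List

Matrix = List[List[float]]


def lora_param_counts(d: int, k: int, r: int):
    """Trainable parameters: full fine-tuning of a d x k matrix vs. a rank-r LoRA."""
    full = d * k
    lora = r * (d + k)  # B is d x r, A is r x k
    return full, lora


def matmul(X: Matrix, Y: Matrix) -> Matrix:
    """Plain nested-loop matrix product (fine for tiny illustrative matrices)."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def merge_lora(W: Matrix, B: Matrix, A: Matrix, alpha: float, r: int) -> Matrix:
    """Return W + (alpha / r) * (B @ A), i.e. the adapter folded into the base weight."""
    delta = matmul(B, A)
    scale = alpha / r
    return [[W[i][j] + scale * delta[i][j] for j in range(len(W[0]))]
            for i in range(len(W))]


full, lora = lora_param_counts(4096, 4096, 8)
merged = merge_lora([[1, 0], [0, 1]], [[1], [0]], [[0, 2]], alpha=2, r=1)
```

For a single 4096 × 4096 projection at rank 8, LoRA trains 65,536 parameters instead of 16,777,216, which is why dataset quality, rather than raw compute, tends to dominate results.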

5. What Is Multimodal Artificial Intelligence (AI)?

The guide explains the concept of multimodal artificial intelligence (AI) and its transformative impact on various fields. It explores the practical applications of multimodal AI, discusses fusion techniques, and offers a concise glossary of key terms in this domain.
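Two of the most common fusion strategies are easy to contrast in code: early fusion combines raw feature vectors from each modality before a single model processes them, while late fusion runs a separate model per modality and combines their predictions. The sketch below assumes already-computed text and image features or class scores; the function names and the equal default weighting are illustrative, not taken from the guide.

```python
from typing import List, Sequence


def early_fusion(text_features: Sequence[float], image_features: Sequence[float]) -> List[float]:
    """Early fusion: concatenate per-modality feature vectors into one input vector."""
    return list(text_features) + list(image_features)


def late_fusion(text_scores: Sequence[float], image_scores: Sequence[float],
                text_weight: float = 0.5) -> List[float]:
    """Late fusion: weighted average of each modality's per-class scores."""
    w = text_weight
    return [w * t + (1 - w) * i for t, i in zip(text_scores, image_scores)]


joint_input = early_fusion([0.1, 0.7], [0.3])
fused_scores = late_fusion([0.8, 0.2], [0.4, 0.6])
```

Early fusion lets a model learn cross-modal interactions but needs aligned inputs; late fusion is simpler and tolerates a missing modality, at the cost of modeling interactions only at the output.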

Papers & Repositories

1. Zephyr: Direct Distillation of LM Alignment

Zephyr 7B, developed by Hugging Face, has achieved impressive results, surpassing Llama 2-Chat 70B on benchmarks such as MT-Bench. Its training approach involves dataset construction, fine-tuning, AI feedback collection, and preference optimization.

2. Distil-Whisper: Robust Knowledge Distillation via Large-Scale Pseudo Labelling

Distil-Whisper is a distilled version of Whisper that is smaller and offers faster inference. It performs well in noisy environments and makes fewer word-repetition and insertion errors. The model is distilled through large-scale pseudo-labelling on a large and diverse dataset, which makes it robust across a range of domains.
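Insertion errors are one of the three components of word error rate (WER), the standard speech-recognition metric: WER = (S + D + I) / N, where S, D, and I are substitutions, deletions, and insertions in a minimum-cost word alignment and N is the number of reference words. The sketch below computes the breakdown with a standard edit-distance table; it is a generic illustration of the metric, not Distil-Whisper’s evaluation code, and it assumes a non-empty reference.

```python
from typing import Tuple


def word_errors(reference: str, hypothesis: str) -> Tuple[int, int, int]:
    """Return (substitutions, deletions, insertions) for a minimum-cost word alignment."""
    ref, hyp = reference.split(), hypothesis.split()
    # dp[i][j] = (total_errors, subs, dels, ins) for aligning ref[:i] with hyp[:j]
    dp = [[(0, 0, 0, 0)] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(1, len(ref) + 1):
        dp[i][0] = (i, 0, i, 0)  # only deletions
    for j in range(1, len(hyp) + 1):
        dp[0][j] = (j, 0, 0, j)  # only insertions
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            if ref[i - 1] == hyp[j - 1]:
                dp[i][j] = dp[i - 1][j - 1]  # a match costs nothing
                continue
            diag, up, left = dp[i - 1][j - 1], dp[i - 1][j], dp[i][j - 1]
            dp[i][j] = min(
                (diag[0] + 1, diag[1] + 1, diag[2], diag[3]),  # substitution
                (up[0] + 1, up[1], up[2] + 1, up[3]),          # deletion
                (left[0] + 1, left[1], left[2], left[3] + 1),  # insertion
            )
    _, subs, dels, inss = dp[-1][-1]
    return subs, dels, inss


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate = (S + D + I) / number of reference words."""
    subs, dels, inss = word_errors(reference, hypothesis)
    return (subs + dels + inss) / len(reference.split())
```

A hallucinated repeated word, as in `word_errors("the cat sat", "the the cat sat")`, shows up purely as an insertion.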

3. LLMs may Dominate Information Access: Neural Retrievers are Biased Towards LLM-Generated Texts

This work quantitatively evaluates different information retrieval (IR) models on human-written and LLM-generated texts and finds that neural retrievers tend to rank LLM-generated texts above human-written ones. This source bias raises concerns and calls for further exploration and evaluation of retrieval systems in the era of LLMs.

4. Is ChatGPT Good at Search? Investigating Large Language Models as Re-Ranking Agents

This paper investigates generative LLMs such as ChatGPT and GPT-4 for relevance ranking in information retrieval. It shows that, when properly instructed, LLMs can achieve better results than state-of-the-art supervised methods on information retrieval benchmarks.
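Because an LLM can only read a limited number of passages at once, the paper re-ranks long candidate lists with a sliding window: the model reorders a small window of passages, and the window moves from the bottom of the list toward the top so that relevant passages can bubble up. The sketch below illustrates that idea; `rank_window` stands in for the LLM call, and `overlap_ranker`, which just counts shared query words, is a hypothetical stub so the example runs without a model. The window and step sizes are illustrative.

```python
from typing import Callable, List, Sequence

# A ranker receives the query and a window of passages and returns them reordered,
# most relevant first. In the paper this role is played by an LLM prompt.
RankFn = Callable[[str, List[str]], List[str]]


def overlap_ranker(query: str, window: List[str]) -> List[str]:
    """Hypothetical stand-in for the LLM: rank passages by shared query words."""
    words = set(query.split())
    return sorted(window, key=lambda p: -len(words & set(p.split())))


def sliding_window_rerank(query: str, passages: Sequence[str], rank_window: RankFn,
                          window: int = 4, step: int = 2) -> List[str]:
    """Re-rank passages by sliding an overlapping window from the back to the front."""
    if step <= 0:
        raise ValueError("step must be positive")
    ranked = list(passages)
    end = len(ranked)
    while True:
        start = max(0, end - window)
        ranked[start:end] = rank_window(query, ranked[start:end])
        if start == 0:
            break
        end -= step
    return ranked


passages = [f"filler passage {k}" for k in range(9)] + ["apple pie recipe"]
reranked = sliding_window_rerank("apple pie", passages, overlap_ranker)
```

Keeping `step` smaller than `window` makes consecutive windows overlap, which is what lets a relevant passage at the very bottom travel all the way to the top in a single pass.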

5. Large Language Models Understand and Can Be Enhanced by Emotional Stimuli

This paper takes a first step towards exploring whether LLMs can understand emotional stimuli. It finds that adding emotional phrases to prompts improves the performance of LLMs, including GPT-4. These “EmotionPrompts” produced higher-quality outputs, with a relative improvement of 8% on Instruction Induction tasks and 115% on BIG-Bench tasks.
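In practice, an EmotionPrompt is the original task prompt with an emotional phrase appended. The sketch below shows that construction; the default stimulus is an example phrase in the spirit of those the paper studies, and the helper name `emotion_prompt` is hypothetical.

```python
# Example emotional stimulus (assumption: one phrase of the kind studied in the paper).
EMOTIONAL_STIMULUS = "This is very important to my career."


def emotion_prompt(task_prompt: str, stimulus: str = EMOTIONAL_STIMULUS) -> str:
    """Append an emotional stimulus to an otherwise unchanged task prompt."""
    return f"{task_prompt.rstrip()} {stimulus}"


prompt = emotion_prompt("Determine whether the following review is positive or negative.")
```

Because the task wording itself is untouched, the stimulus can be A/B tested against the plain prompt on the same evaluation set.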

Enjoy these papers and news summaries? Get a daily recap in your inbox!

The Learn AI Together Community section!

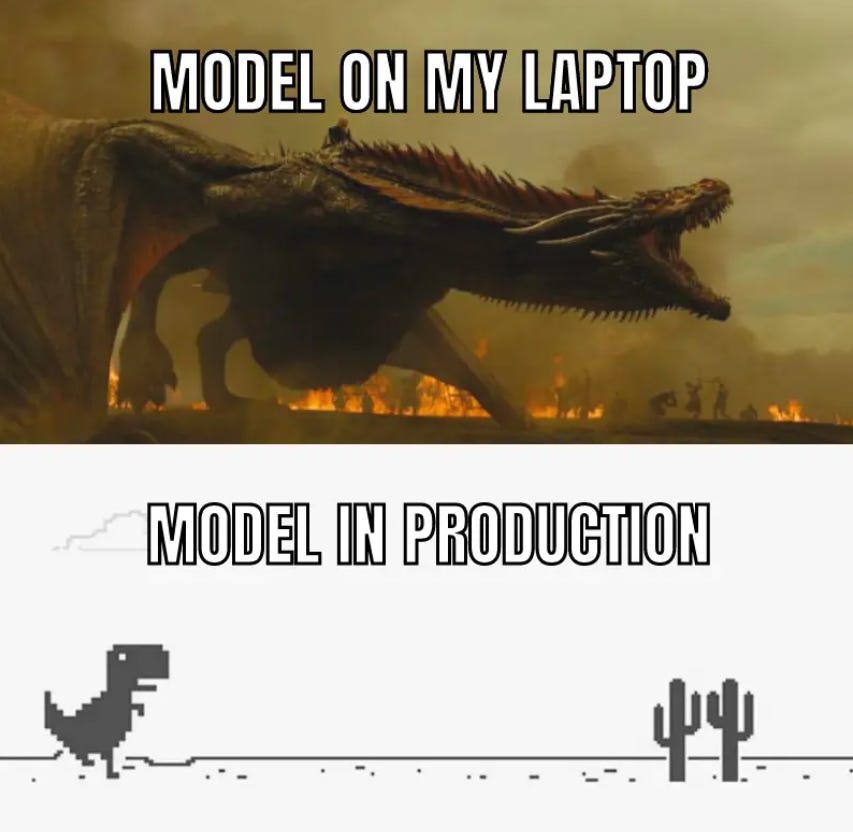

Meme of the week!

Meme shared by rucha8062

Featured Community post from the Discord

Henry has just launched DearFlow for beta testing! It’s an all-in-one platform for you to create and discover AI use cases (chatbots and workflows). It combines the power of FlowGPT with Notion. It allows users to execute complex workflows that chat interfaces like ChatGPT cannot handle. Check it out here and support a fellow community member! Share your thoughts and feedback in the thread here.

AI poll of the week

Tell us how you are using AI tools to boost your productivity or in your current job! Join the discussion on Discord.

TAI Curated section

Article of the week

Top Important LLM Papers for the Week from 23/10 to 29/10 by Youssef Hosni

Large language models (LLMs) have advanced rapidly in recent years. As new generations of models are developed, it’s essential for researchers and engineers to stay informed about the latest progress. This article summarizes some of the most important LLM papers published during the fourth week of October.

Our must-read articles

Is it Possible to Prove the Simulation Hypothesis? by Lee Vaughan

Enhancing The Robustness of Regression Model with Time-Series Analysis — Part 1 by Mirza Anandita

A Complete Guide for Creating an AI Assistant for Summarizing YouTube Videos — Part 2 by Amin Kamali

If you are interested in publishing with Towards AI, check our guidelines and sign up. We will publish your work to our network if it meets our editorial policies and standards.

Job offers

Data Engineer @Pearl Technologies (Remote)

Robotics Software Intern 2024 @Rapyuta Robotics (Japan)

Mobile Engineer, Full Stack (LLM/GenAI) @Mercari, inc. (Remote)

Data Analytics Manager @Humanforce (Sydney, Australia)

Quantitative Developer — Temporary @Twine (Remote)

QA Engineer @CRISP (London, UK)

Python Interns (Mumbai) @Docsumo (Mumbai, India)

Interested in sharing a job opportunity here? Contact sponsors@towardsai.net.

If you are preparing for your next machine learning interview, don’t hesitate to check out confetti, our leading interview preparation website!

This AI newsletter is all you need #72 was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.
