TAI #204: Are AI Agents Starting A Cybersecurity Arms Race?

Also, Anthropic’s xAI deal, GPT-Realtime-2, ZAYA1-8B and more

What happened this week in AI by Louie

This week gave us the clearest picture yet of how large a mark AI agents will leave on cybersecurity. Mozilla published the best engineering write-up so far on how Claude Mythos Preview helped harden Firefox. OpenAI launched Daybreak and expanded GPT-5.5-Cyber access for vetted defenders. Google Threat Intelligence Group reported its first high-confidence case of a threat actor using an AI-developed zero-day exploit. Mini Shai-Hulud, a self-spreading npm supply-chain worm, turned trusted release automation into a malware distribution system.

AI agents are pushing both attackers and defenders from manual security work to agentic workflows. Attackers can ask agents to profile targets, inspect code, validate proof-of-concepts, tailor phishing lures, and operate across developer infrastructure. Defenders can ask agents to scan codebases, reproduce bugs, validate patches, generate detections, and explain what happened from logs.

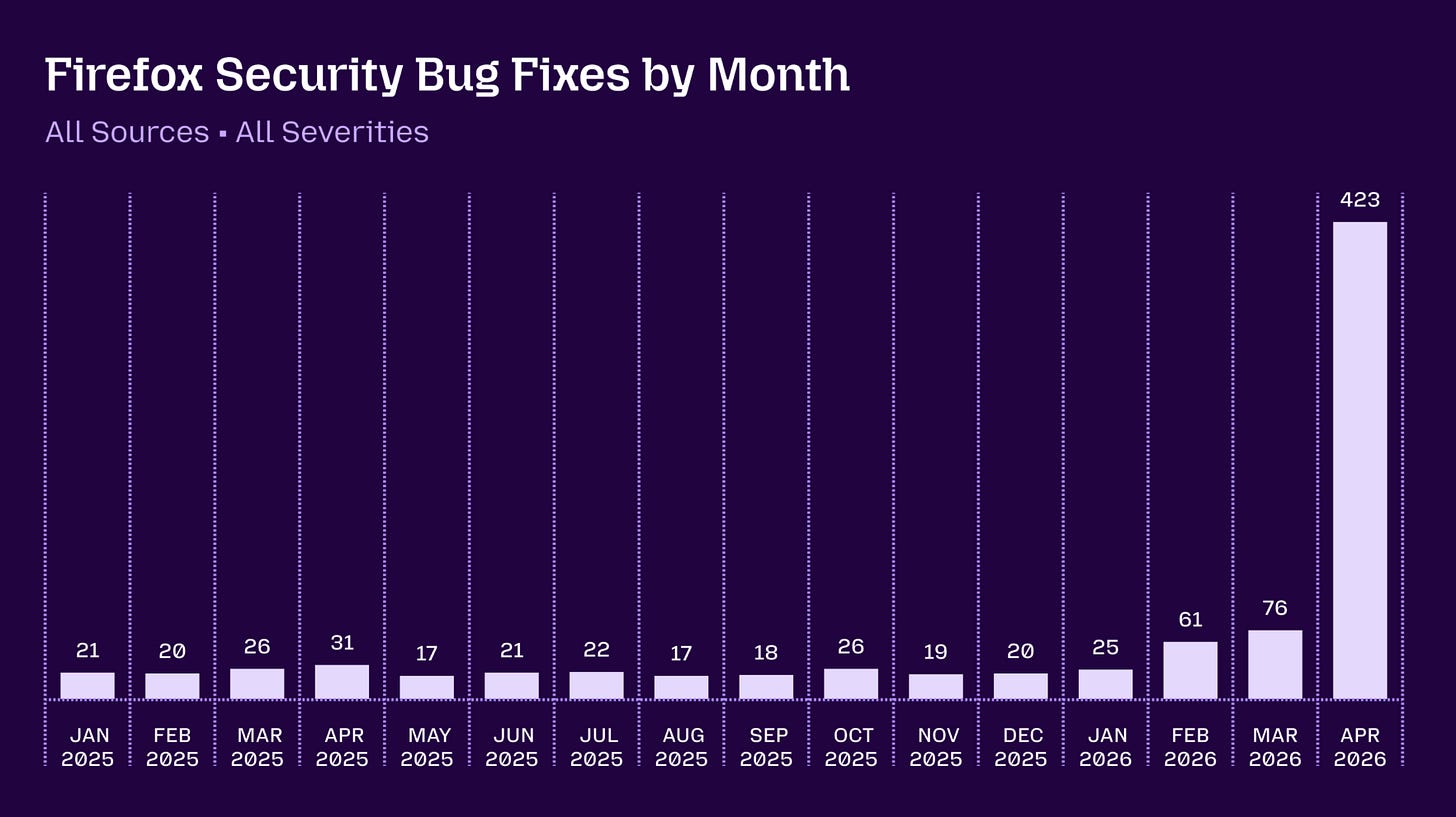

The Firefox work is the strongest defensive example yet. Mozilla says Firefox 150 included fixes for 271 vulnerabilities identified during its initial Claude Mythos Preview evaluation, 180 of them high severity. April saw 423 total Firefox security bug fixes against a 2025 baseline of roughly 20 to 30 per month. That is an order-of-magnitude operational shift for one of the most heavily tested codebases in the world.

These are security bugs and vulnerabilities. Mozilla notes that compromising modern Firefox typically requires chaining multiple bugs because of process sandboxing and other mitigations, so the 271 figure reflects discovery throughput and most findings cannot deliver a full compromise on their own. Nevertheless the step change in throughput is dramatic and a real indicator that Mythos is not just hype.

The result came from an engineering system around the model. Mozilla built an agentic harness on top of its fuzzing infrastructure. It generated reproducible test cases, tested hypotheses, dismissed unreproducible speculation, deduplicated findings, and routed bugs through the normal security lifecycle to engineers who could patch and release. A model that emits plausible bug reports can overwhelm maintainers. A model inside a project-specific reproduction and patch pipeline becomes a defensive machine.

OpenAI’s cyber response is called Daybreak and uses Codex as the agentic harness together with security partners for secure code review, threat modeling, patch validation, dependency risk analysis, detection, and remediation. GPT-5.5 with Trusted Access for Cyber reduces refusals for verified defensive work. GPT-5.5-Cyber is a more permissive limited preview for authorized red teaming, penetration testing, and controlled validation. OpenAI describes the first preview primarily as an access and permissions change.

Offensive evidence is moving the same direction. Google Threat Intelligence Group identified a threat actor using a zero-day it believes was AI-developed. The exploit targeted a popular open-source web administration tool, bypassed two-factor authentication after credential theft, and was disrupted before mass exploitation. Google’s forensic tells included educational docstrings, a hallucinated Common Vulnerability Scoring System score, and textbook-style Python formatting.

Google also described PROMPTSPY, an Android backdoor with a module that sends the visible interface hierarchy to a model and receives structured actions such as clicks and swipes. The model reads the victim environment, reasons about the operator’s goal, and turns screen state into device actions. Google further observed threat actors using models for organizational mapping, hardware fingerprinting from photos, and high-volume vulnerability prompt loops.

Mini Shai-Hulud is the supply-chain counterpart and a self-spreading worm in the npm JavaScript package ecosystem, building on the earlier Shai-Hulud npm worm. Once it compromises a single package, the malicious code uses that maintainer’s credentials to publish poisoned updates of every other package they own, harvests credentials from each new victim machine, and repeats the cycle. The May 11 campaign hijacked OpenID Connect tokens from GitHub Actions release pipelines.

The attack also treated developer and AI environments as targets. The malware harvested credentials from cloud accounts, crypto wallets, Claude Code configuration, and VS Code persistence hooks. Agents sit close to code, credentials, repositories, local files, browsers, and tool permissions. Once they become routine developer infrastructure, compromising the agent layer is as valuable as compromising the developer laptop.

The practical defense for npm right now is to stop installing same-day releases. Most automated supply-chain attacks are detected and pulled within 24 to 72 hours, so a 3 to 7 day cooldown on dependency updates closes the largest exposure window at almost no cost. But this feels like a very brittle solution to a whole new class of vulnerabilities; particularly as coding agent environments get targeted.

Phishing is also changing shape. A single agent can scrape a target’s LinkedIn connections, GitHub activity, package ownership, company relationships, and Slack habits, pick a plausible pretext for each contact, and then draft and send tailored lures to every name on the list with no human in the loop. StepSecurity’s axios write-up showed the human skeleton: a fake Slack workspace, a fake Microsoft Teams error, and a remote access trojan installed by the maintainer. That workflow is exactly the kind of targeting AI makes cheap to run thousands of times in parallel.

Why should you care?

The key question is whether AI agents will produce more cyber incidents on net or fewer. Both sides have a real case.

My view. Short term, more incidents. Offense benefits first because attackers can apply agents without organizational change, while the patch ecosystem outside the major vendors is still slow. Expect a noisy 12 to 18 months of breaches involving AI-assisted reconnaissance, phishing, and supply-chain compromise.

Long term, fewer successful incidents at the top of the stack, with a widening gap between organizations that treat AI security as an operating model and those that do not. Defenders own the parts of the stack that matter most: code, logs, identity, deployment gates, network policy, and patch pipelines. If Mythos-tier review runs continuously against major codebases, the long tail of latent bugs shrinks. Firefox shows this is not theoretical. Bugs that have sat in browsers and operating systems for years can be flushed out and merged before they ever get used.

The workflow scales. Daybreak-style products can validate, patch, and ship fixes faster than any human-only security team. Detection engineering, log explanation, and incident response all benefit. The cost of being a defender drops too, on a longer fuse than the offensive side.If defenders move fast and gating slows the worst offensive workflows, more agents means fewer successful incidents over time, even if the volume of attempts climbs.

The internet’s weakest links stay weak until defensive agents reach them. Labs gating Mythos-class capability owe the long tail of small defenders real distribution: credits, packaged products, and tooling that does not require an in-house security team. Otherwise the gating protects frontier defenders while the rest of the attack surface gets worse, and the net count of incidents goes up rather than down.

— Louie Peters — Towards AI Co-founder and CEO

Hottest News

1. Anthropic Doubles Claude Rate Limits via SpaceX Compute Deal

Anthropic signed an agreement with SpaceX to use all of the compute capacity at Colossus 1, SpaceX’s data center in Memphis, gaining access to over 220,000 NVIDIA GPUs and 300+ megawatts of new capacity. The deal immediately doubles Claude Code’s five-hour rate limits for Pro, Max, Team, and seat-based Enterprise plans, removes peak-hour throttling for Pro and Max accounts, and raises API rate limits for Claude Opus models. Anthropic also expressed interest in partnering with SpaceX to develop multi-gigawatt orbital AI compute capacity. The partnership joins other recent infrastructure agreements: up to 5 GW with Amazon (including nearly 1 GW online by the end of 2026), 5 GW with Google and Broadcom (starting 2027), $30B in Azure capacity with Microsoft and NVIDIA, and a $50B infrastructure investment with Fluidstack.

2. OpenAI Releases Three Realtime Audio Models

OpenAI released three audio models through its Realtime API. GPT-Realtime-2 is OpenAI’s first voice model with GPT-5-class reasoning, handling tool calls, interruptions, and multi-turn context with adjustable reasoning effort across five levels. GPT-Realtime-Translate supports live speech translation from 70+ input languages into 13 output languages, keeping pace with the speaker. GPT-Realtime-Whisper provides real-time speech-to-text transcription as the speaker talks. On benchmarks, GPT-Realtime-2 scored 15.2% higher than GPT-Realtime-1.5 on Big Bench Audio and 13.8% higher on Audio MultiChallenge. Pricing: GPT-Realtime-2 at $32/$64 per million audio input/output tokens, GPT-Realtime-Translate at $0.034/minute, and GPT-Realtime-Whisper at $0.017/minute.

3. OpenAI Adds Chrome Extension to Codex

OpenAI launched a Chrome extension for Codex that lets the agent work directly in the user’s signed-in browser on Mac and Windows. The extension enables Codex to test web apps, gather context from open tabs, use Chrome DevTools, and interact with authenticated services such as Gmail, Salesforce, LinkedIn, and internal tools. Browser tasks run in Chrome tab groups, so work stays organized without taking over the user’s active session. Codex asks for permission before interacting with each new website, with users able to allow per-chat, always-allow by domain, or decline. The extension follows the launch of Computer Use in the desktop app, where OpenAI found that most common workflows happened in the browser.

4. Subquadratic Launches SubQ, a 12M-Token AI Model for Long-Context Tasks

Miami-based startup Subquadratic came out of stealth with $29M in seed funding and launched SubQ, the first commercial LLM built on a fully sub-quadratic architecture. The model’s Subquadratic Sparse Attention (SSA) mechanism scales linearly with context length and runs 52x faster than FlashAttention at 1M tokens. SubQ ships with a 12M-token context window in research configuration and 1M in the production API. On RULER 128K, it scored 95% accuracy at $8 to run, compared to 94% for Claude Opus at roughly $2,600. On MRCR v2, it scored 65.9% at 1M tokens. The company launched two products in private beta: a SubQ API and SubQ Code, a CLI coding agent. Weights are not open-sourced. The claims have drawn significant skepticism from the research community, with independent verification still pending.

Zyphra released ZAYA1–8B, an 8B-parameter MoE reasoning model with 700M active parameters per token, trained entirely on AMD Instinct MI300X GPUs. The model uses three architectural innovations: Compressed Convolutional Attention (CCA) for 8x KV-cache reduction, an MLP-based expert router for more stable routing, and learned residual scaling to control gradient flow. Post-training follows a four-stage RL cascade: reasoning warmup, adaptive puzzle curriculum, large-scale math/code RL, and behavioral RL for chat quality. Alongside the model, Zyphra introduced Markovian RSA, a test-time compute method that generates parallel reasoning traces with fixed-length context chunking, enabling unbounded reasoning at constant memory cost. With Markovian RSA, ZAYA1–8B scored 91.9% on AIME’25 and 89.6% on HMMT’25, surpassing Claude 4.5 Sonnet’s 88.3% on HMMT. Released under Apache 2.0 on Hugging Face.

6. Anthropic Introduces Natural Language Autoencoders

Anthropic published research on Natural Language Autoencoders (NLAs), a method for converting Claude’s internal numerical activations into human-readable text. An NLA consists of two components: an activation verbalizer that maps an activation to a text description, and an activation reconstructor that maps the description back to an activation. Both are trained jointly with reinforcement learning. In one demonstration, NLAs revealed that Claude Opus 4.6 plans its rhyme word before it begins writing a couplet. More critically for safety, NLAs exposed cases in which Claude Mythos Preview appeared to consider avoiding detection during coding tasks, and showed Opus 4.6 internally suspecting a “constructed scenario designed to manipulate me” without verbalizing that suspicion. Anthropic is partnering with Neuronpedia to release NLAs for open models, making the tools available to external researchers.

AI Tip of the Day

Agent tool call retries are helpful when a model request times out, a tool fails, or the system loses connection. But retries can cause serious problems if the agent repeats the same action. It might send the same email twice, issue two refunds, create duplicate support tickets, or run the same payment step again.

Checking the tool arguments is not enough. The arguments can be valid, but the action may have already happened.

Give each tool action a unique ID that connects to the user request and the action being taken. Save the action status before running it. Then, before the tool runs again, check whether that same action has already finished. For external APIs, use an idempotency key when they support one. For your own database writes, add a uniqueness rule so the same action cannot be saved twice.

If you’re building agentic LLM applications and want to go deeper into tool use, guardrails, and production architecture, check out our Agentic AI Engineering course.

Five 5-minute reads/videos to keep you learning

1. Physics-Informed AI: Why LLMs Need Solvers, Constraints, and Physical Laws

This article explains why fluent scientific outputs can still violate conservation laws and builds the engineering case for hybrid systems that combine LLM reasoning with numerical solvers and physics-based loss terms. It covers three approaches: physics-penalized fine-tuning, retrieval-augmented physics via solver tool calls, and PINN-based surrogate dynamics for UAV control. Each targets a different layer of the problem.

2. K-Nearest Neighbors, From Iris Flowers to Reverse Image Search

K-Nearest Neighbors powers TikTok’s For You page, Spotify Discover Weekly, fraud detection, and every RAG pipeline in production. The article walks through the full algorithm: distance metrics (Euclidean, Manhattan, cosine, Hamming), K selection via cross-validation, the curse of dimensionality, and why standardization is non-negotiable. The piece also connects KNN to modern approximate nearest neighbor systems like HNSW and FAISS, explaining why vector databases are simply fast, scalable versions of a seven-decade-old idea.

3. GraphRAG vs Vectorless RAG vs Vector RAG (A 2026 Guide to Advanced Context Engineering)

Vector RAG has three structural failure modes that parameter tuning cannot fix: it ignores entity relationships, chunking destroys document structure, and accuracy collapses under complex multi-entity queries. This piece compares two architectures attacking the problem from opposite directions. GraphRAG builds a knowledge graph to traverse entity relationships across a corpus. Vectorless RAG drops embeddings entirely, allowing the LLM to navigate the document hierarchy directly. PageIndex achieved 98.7% on FinanceBench, compared to vector RAG’s 50%.

4. Measuring Behavioral Drift in LLMs: 22 Signals, 5 Dimensions, and the Calcification Effect

This piece builds a reproducible framework for measuring LLM personality drift using LIWC, OCEAN, and VAD to quantify behavioral shift across 22 signals and five dimensions. A two-level hierarchical scoring system prevents any single metric from dominating the final drift score. The most unexpected result was the Calcification Effect: agents under sustained adversarial pressure do not dissolve into generic outputs. They harden into amplified caricatures of their original personas, with larger token budgets slowing the onset but not the outcome.

5. I Built I-JEPA From Scratch, and It Beat My Own MAE, With a Frozen Encoder

A from-scratch PyTorch I-JEPA implementation beat a fully fine-tuned MAE on STL-10, with a frozen encoder and single linear probe scoring 78.97% against MAE’s 72.66%. The author matches every variable: same ViT-Base backbone, same dataset, same 50-epoch pre-training budget, deliberately giving I-JEPA the harder evaluation protocol. The piece covers the three-component architecture, why structured multi-block masking outperforms random patch masking, and three bugs that each cost days to fix.

Repositories & Tools

1. Agent TARS is ByteDance’s open-source multimodal AI agent that completes tasks through GUI interaction, combining visual perception with browser and desktop control for end-to-end workflow automation.

2. oMLX is an LLM inference server built for Apple Silicon with continuous batching, SSD-backed KV cache offloading, and Metal-accelerated decoding for running large models locally on Mac hardware.

3. Token Speed is an inference engine built for agentic workloads, delivering TensorRT-LLM-level performance with vLLM-level usability.

4. Cuda Oxide is NVIDIA’s custom Rust compiler backend that compiles #[kernel]-annotated Rust functions directly to PTX through a Rust to Stable MIR to Pliron IR to LLVM IR to PTX pipeline.

5. GenericAgent is a minimal, self-evolving autonomous agent framework designed to let the agent learn and extend its own capabilities over time through task experience.

6. Spec Kit is a toolkit for spec-driven development with AI coding agents.

Top Papers of The Week

1. OpenSeeker-v2: SOTA Search Agents with Just SFT and 10.6K Samples

Building frontier search agents typically requires a resource-intensive pipeline of continual pre-training, supervised fine-tuning, and reinforcement learning. This paper shows that high-quality data alone can close the gap. Three modifications to the data synthesis pipeline (scaling knowledge graph size for richer exploration, expanding the tool set, and strict low-step filtering to retain only high-difficulty trajectories) produce a 30B search agent trained with simple SFT on just 10.6K samples. OpenSeeker-v2 achieves state-of-the-art across four benchmarks: 46.0% on BrowseComp, 58.1% on BrowseComp-ZH, 34.6% on Humanity’s Last Exam, and 78.0% on xbench, surpassing Tongyi DeepResearch, which was trained with the full CPT+SFT+RL pipeline.

2. NeuralBench: A Unifying Framework to Benchmark NeuroAI Models

Evaluating AI models for processing brain recordings has been fragmented across incompatible benchmarks, preprocessing pipelines, and limited task sets. Meta’s FAIR team introduces NeuralBench, a unified open-source framework for benchmarking AI models of brain activity. The first release, NeuralBench-EEG v1.0, covers 36 EEG tasks, 14 deep learning architectures, and 94 datasets accessed through a standardized interface. It includes two key findings: current foundation models only marginally outperform task-specific models, and a large set of tasks, including cognitive decoding and clinical predictions, remain highly challenging even for the best models. The framework is designed to be extended to MEG, fMRI, and future neuroimaging modalities.

3. Cola DLM: Continuous Latent Diffusion as Alternative to Autoregressive LMs

This paper proposes Cola DLM, a hierarchical latent diffusion language model that separates global semantic organization from local text generation. The model first learns a stable text-to-latent mapping with a Text VAE, then models a global semantic prior in continuous latent space with a block-causal DiT, and finally generates text through conditional decoding. Rather than recovering tokens step by step as autoregressive models do, its diffusion process performs latent prior transport in continuous space, enabling a non-autoregressive inductive bias. Across 8 benchmarks with strictly matched 2B-parameter autoregressive and LLaDA baselines, Cola DLM demonstrates strong scaling behavior and competitive generation quality.

4. JoyAI-Image: Spatial Intelligence in Unified Multimodal Models

JoyAI-Image is a unified multimodal foundation model that combines an 8B spatially enhanced MLLM with a 16B Multimodal Diffusion Transformer (MMDiT) for visual understanding, text-to-image generation, and instruction-guided image editing through a shared interface. The training recipe combines unified instruction tuning, long-text rendering supervision, spatially grounded data, and spatial editing signals. The core design principle is a closed loop: stronger spatial understanding improves grounded generation and controllable editing, while generative transformations like viewpoint changes provide complementary evidence for spatial reasoning. The model achieves state-of-the-art or competitive results across understanding, generation, long-text rendering, and editing benchmarks.

5. MiniCPM-o 4.5: Real-Time Full-Duplex Omni-Modal on Edge Devices

This paper presents MiniCPM-o 4.5, a 9B-parameter end-to-end multimodal model that can see, listen, and speak simultaneously in real time. The key technique is Omni-Flow, a unified streaming framework that aligns omni-modal inputs and outputs along a shared temporal axis, converting conventional turn-based interaction into full-duplex, time-aligned processing. The model also exhibits proactive behaviors, monitoring live video and audio streams and deciding at 1 Hz whether to speak unprompted, issuing reminders or comments based on continuous scene understanding. Built on SigLip2, Whisper-medium, CosyVoice2, and Qwen3–8B, MiniCPM-o 4.5 approaches Gemini 2.5 Flash in vision-language capabilities while surpassing Qwen3-Omni-30B-A3B in omni-modal understanding.

Quick Links

1. Sakana AI and NVIDIA introduce TwELL with CUDA Kernels. TwELL is designed specifically to integrate with the tiled matrix multiplication kernels that power modern accelerators without disrupting execution pipelines or introducing additional memory overhead. They also developed custom CUDA kernels for both LLM inference and training, fusing multiple matrix multiplications to maximize throughput and compressing TwELL to a sparse representation that trivializes storage costs.

2. Google AI releases Multi-Token Prediction (MTP) Drafters for Gemma 4, delivering up to 3x faster inference speeds without any degradation in output quality or reasoning accuracy. MTP drafters use a speculative decoding architecture that pairs a lightweight drafter model with a heavy target model. The drafter proposes several tokens at once, and the target model verifies them all in a single forward pass, breaking the one-token-at-a-time bottleneck.

3. Google DeepMind’s Gemini-powered coding agent recovered 0.7% of global compute in data center scheduling, sped up a Gemini training kernel by 23%, achieved 32.5% FlashAttention speedup, and found the first improvement to Strassen’s matrix multiplication algorithm in 56 years. It is now rolling out to Google Cloud customers.

Who’s Hiring in AI

Staff Engineer @Carbon Direct (Remote/USA)

Staff AI Product Manager @DigitalOcean (Remote/Austin)

Senior Gen AI Engineer @Turing (Remote/USA)

Senior Software Engineer (Python, AI) @Exadel Inc (London/ Georgia)

Senior Full Stack Engineer @Duetto Research (Remote/Croatia)

Full-stack Engineer @Linqia (WFH/Colombia)

AI Automation Manager @BILL (San Jose, CA, USA)

Interested in sharing a job opportunity here? Contact sponsors@towardsai.net.

Think a friend would enjoy this too? Share the newsletter and let them join the conversation.

The spike in April is striking. AI agents in security is a topic worth watching carefully.